
Hi Yuhao,

Since RAM is relatively inexpensive, if you have another 64GB lying around, why not add it to bring the total to 128GB to serve your 482TB?

From what I have read, the general recommendation appears to be 1GB of RAM per 1TB of disk.
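To put that rule of thumb in numbers, here is a small Python sketch. It assumes the ratio is exactly 1GB of RAM per TB of usable disk, which is a heuristic rather than an official ZFS or Gluster requirement. It uses the 482TB pool and the 64GB/128GB RAM figures from this thread; the function names are illustrative only.

```python
# Rough RAM sizing under the "1GB RAM per 1TB disk" rule of thumb.
# Heuristic only; not an official ZFS or Gluster requirement.

GB_RAM_PER_TB_DISK = 1.0  # ratio taken from the rule of thumb


def recommended_ram_gb(disk_tb: float, ratio: float = GB_RAM_PER_TB_DISK) -> float:
    """Return the RAM (GB) the rule of thumb suggests for disk_tb of disk."""
    return disk_tb * ratio


def ram_per_tb(ram_gb: float, disk_tb: float) -> float:
    """Return how many GB of RAM are available per TB of disk."""
    return ram_gb / disk_tb


if __name__ == "__main__":
    disk_tb = 482  # usable zpool size from Yuhao's setup
    print(f"rule of thumb: {recommended_ram_gb(disk_tb):.0f} GB of RAM")
    for ram in (64, 128):
        print(f"{ram} GB RAM -> {ram_per_tb(ram, disk_tb):.2f} GB per TB")
```

By this heuristic, 482TB would call for roughly 482GB of RAM. Even 128GB therefore works out to only about 0.27GB per TB.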

In our setup, we use a RAID card to build a RAID 10 array and present it to Gluster as a single brick. I have seen an issue with throughput. If I write directly to the RAID 10, I get around 250MB/sec. Through the Gluster-mounted volume I get 100MB/sec, and if I put NFS on top, it drops to 30MB/sec.
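Expressed as a share of the direct RAID 10 throughput, the figures above work out as follows. This is a quick Python sketch that uses only the numbers reported here.

```python
# Write throughput (MB/sec) as reported for Edy's RAID 10 setup.
MEASUREMENTS = {
    "direct to RAID 10": 250,
    "Gluster-mounted volume": 100,
    "NFS on top of Gluster": 30,
}


def retained_fraction(layer_mb_s: float, baseline_mb_s: float) -> float:
    """Share of the baseline throughput that remains at a given layer."""
    return layer_mb_s / baseline_mb_s


if __name__ == "__main__":
    baseline = MEASUREMENTS["direct to RAID 10"]
    for layer, mb_s in MEASUREMENTS.items():
        share = retained_fraction(mb_s, baseline)
        print(f"{layer}: {mb_s} MB/sec ({share:.0%} of direct)")
```

In other words, the Gluster mount kept about 40% of the direct write rate, and NFS on top of it kept about 12%.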

Regards,
Edy

On 8/8/2018 1:49 PM, Yuhao Zhang wrote:

Hi Xavi,

Thank you for the suggestions, these are extremely

helpful. I haven't thought it could be ZFS problem. I went back

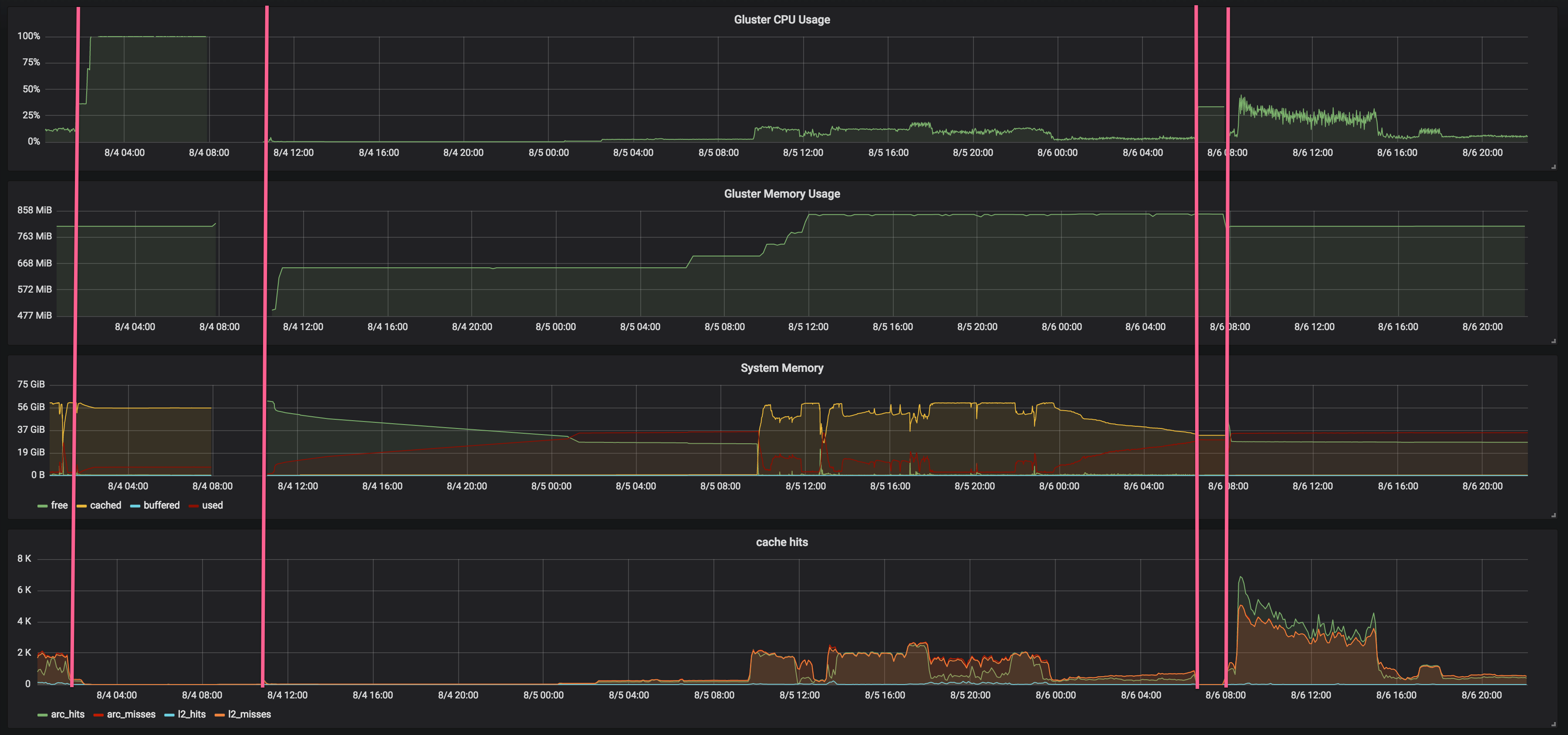

and checked a longer monitoring window and now I can see a

pattern. Please see this attached Grafana screenshot (also

available here:� https://cl.ly/070J2y3n1u0F�. Note

that the data gaps were when I took down the server for

rebooting):

Between 8/4 and 8/6, I ran two transfer tests and experienced the Gluster hang twice. It happened once during the first transfer and once shortly after the second transfer. I marked both with pink lines.

It looks like free memory was almost exhausted during my transfer tests. The system has very high cached memory, which I think was due to the ZFS ARC. However, I was under the impression that ZFS releases space from the ARC when it sees that available system memory is low. I am not sure why it didn't do that.
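One way to check whether the ARC actually shrank under memory pressure is to compare its current size with its ceiling. The sketch below assumes that ZFS on Linux exposes the kstat file /proc/spl/kstat/zfs/arcstats. It also assumes that file contains `size` and `c_max` rows in "name type data" form. The function names are hypothetical helpers, not part of any ZFS tool.

```python
from pathlib import Path
from typing import Dict

# ZFS on Linux kstat file (assumed location on this system).
ARCSTATS = Path("/proc/spl/kstat/zfs/arcstats")
GIB = 1024 ** 3


def parse_arcstats(text: str) -> Dict[str, int]:
    """Parse "name type data" rows from an arcstats dump into {name: value}."""
    stats: Dict[str, int] = {}
    for line in text.splitlines():
        parts = line.split()
        # Skip the kstat header line (more than 3 fields) and the
        # "name type data" column header.
        if len(parts) != 3 or parts[0] == "name":
            continue
        try:
            stats[parts[0]] = int(parts[2])
        except ValueError:
            continue  # ignore any non-numeric rows
    return stats


def arc_usage(stats: Dict[str, int]) -> str:
    """Summarize the current ARC size against its ceiling (c_max)."""
    size, c_max = stats["size"], stats["c_max"]
    return (f"ARC size {size / GIB:.1f} GiB of c_max {c_max / GIB:.1f} GiB "
            f"({size / c_max:.0%})")


if __name__ == "__main__":
    if ARCSTATS.exists():
        print(arc_usage(parse_arcstats(ARCSTATS.read_text())))
    else:
        print(f"{ARCSTATS} not found; is the zfs module loaded?")
```

Suppose `size` stays near `c_max` while free memory is exhausted. That would be consistent with the pattern described above. Treat this as a diagnostic idea, not a confirmed explanation.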

I didn't tweak the related ZFS parameters. zfs_arc_max was set to 0 (the default value). According to the docs, it is "Max arc size of ARC in bytes. If set to 0 then it will consume 1/2 of system RAM." So it appears that this setting didn't work.
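The quoted default is easy to check against this server. With zfs_arc_max=0, the ceiling should be half of RAM: about 32GiB on a 64GB machine, treating 64GB as 64GiB. The sketch below computes that value. It also formats an explicit limit as a module option line of the kind usually placed in a file under /etc/modprobe.d/, which takes effect when the module is loaded. The 24GiB value is purely an illustrative assumption, not a recommendation for this setup.

```python
GIB = 1024 ** 3


def effective_arc_max(zfs_arc_max: int, ram_bytes: int) -> int:
    """ARC ceiling in bytes; 0 means the documented default of half of RAM."""
    return ram_bytes // 2 if zfs_arc_max == 0 else zfs_arc_max


def modprobe_option(arc_max_bytes: int) -> str:
    """Format zfs_arc_max as a module option line for a modprobe.d config file."""
    if arc_max_bytes <= 0:
        raise ValueError("zfs_arc_max must be a positive byte count")
    return f"options zfs zfs_arc_max={arc_max_bytes}"


if __name__ == "__main__":
    ram = 64 * GIB  # physical memory on Yuhao's server, assumed to mean GiB
    print(effective_arc_max(0, ram) // GIB, "GiB default ceiling")
    print(modprobe_option(24 * GIB))  # example cap only
```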

When the server was under heavy IO, the used memory actually decreased, which I can't explain.

May I ask if you, or anyone else in this group, have recommendations on ZFS settings for my setup? My server has 64GB of physical memory and 150GB of SSD space reserved for L2ARC. The zpool has 6 vdevs, each made of 10 x 12TB hard drives in raidz2. Total usable space in the zpool is 482TB.

Thank you,
Yuhao

Hi Yuhao,

Hello,

I just experienced another hang one hour ago, and the server was not even under heavy IO.

Atin, I attached the process monitoring results and another statedump.

Xavi, ZFS was fine: during the hang, I could still write directly to the ZFS volume. My ZFS version: ZFS: Loaded module v0.6.5.6-0ubuntu16, ZFS pool version 5000, ZFS filesystem version 5

I highly recommend upgrading to at least version 0.6.5.8. It fixes a kernel panic that can happen when ZFS is used with Gluster. However, this is not your current problem.
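To check a host against that minimum, the version can be taken from the "ZFS: Loaded module v..." kernel log line quoted above. This Python sketch assumes that line format and ignores the distribution suffix (such as -0ubuntu16), so it compares upstream version numbers only. The helper names are hypothetical.

```python
import re
from typing import Tuple

Version = Tuple[int, ...]


def module_version(log_line: str) -> Version:
    """Extract the numeric ZFS module version from a 'Loaded module' log line."""
    match = re.search(r"Loaded module v(\d+(?:\.\d+)*)", log_line)
    if match is None:
        raise ValueError(f"no ZFS module version in: {log_line!r}")
    return tuple(int(part) for part in match.group(1).split("."))


def meets_minimum(version: Version, minimum: Version = (0, 6, 5, 8)) -> bool:
    """True if version >= minimum, comparing component by component."""
    return version >= minimum


if __name__ == "__main__":
    line = ("ZFS: Loaded module v0.6.5.6-0ubuntu16, ZFS pool version 5000, "
            "ZFS filesystem version 5")
    v = module_version(line)
    print(".".join(map(str, v)), "meets 0.6.5.8:", meets_minimum(v))
```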

The top statistics show low available memory and high CPU utilization by the kswapd process (along with one of the Gluster processes).

I've seen frequent memory management problems with ZFS. Have you configured any ZFS parameters? It's highly advisable to tweak some memory limits.

If that were the problem, there's one thing that should alleviate it (and show whether it is related):

    echo 3 > /proc/sys/vm/drop_caches

This should be done on all bricks from time to time. You can also wait until the problem appears, but in that case the recovery time can be longer.
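Here is a minimal Python sketch of the cache drop suggested above, assuming it runs as root on each brick server. It calls sync first because, per the kernel's vm documentation, drop_caches does not free dirty objects. It then writes the same value, 3, as the echo command (1 = page cache, 2 = dentries and inodes, 3 = both). The `target` parameter is a hypothetical addition so that the function can be exercised against an ordinary file. As Xavi says, this alleviates the symptom and helps show whether it is related; it is not a fix.

```python
import os
from pathlib import Path

DROP_CACHES = Path("/proc/sys/vm/drop_caches")


def drop_caches(level: int = 3, target: Path = DROP_CACHES) -> None:
    """Flush dirty data, then ask the kernel to drop clean caches (needs root)."""
    if level not in (1, 2, 3):
        raise ValueError("level must be 1 (page cache), 2 (dentries/inodes) or 3 (both)")
    os.sync()  # write back dirty pages so more cache becomes droppable
    target.write_text(f"{level}\n")  # same effect as: echo 3 > /proc/sys/vm/drop_caches
```

Running it periodically on every brick (for example from cron) is left to the local setup.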

I think this should fix the high CPU usage of kswapd. If it does, we'll need to tweak some ZFS parameters.

I'm not sure whether the high CPU usage of Gluster is related to this.

Xavi

_______________________________________________
Gluster-users mailing list
Gluster-users@xxxxxxxxxxx
https://lists.gluster.org/mailman/listinfo/gluster-users
