Hello List,

I made a mistake: I drained a host instead of putting it into Maintenance Mode (for an OS reboot). :-/

After "Stop Drain" and restoring the original "crush reweight" values, everything looks fine so far.

cluster:

health: HEALTH_OK

services:

[..]

osd: 79 osds: 78 up (since 3h), 78 in (since 6w); 166 remapped pgs

[..]

And a small number of objects are misplaced:

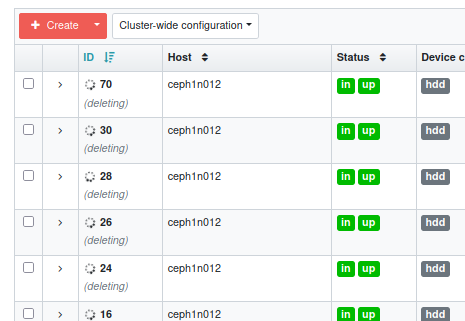

But I can't get rid of the annoying "deleting" status shown on this host's OSDs.

Does anyone have a hint for me?

Thanks,

Christoph
