My initiator is also the VMware software iSCSI initiator. I had my tgt iSCSI targets' write-cache setting off.

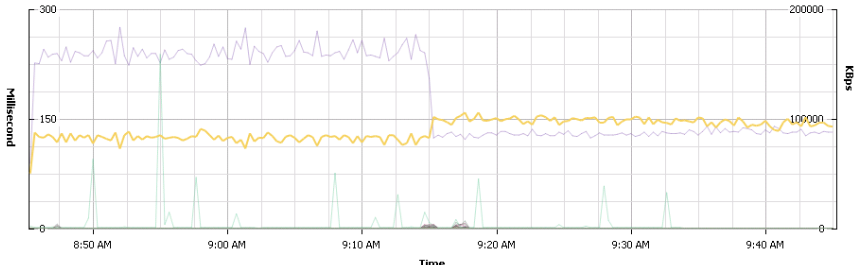

I turned write and read cache on in the middle of creating a large eager-zeroed disk (tgt has no VAAI support, so this is all regular synchronous IO) and it did give me a clear performance boost.

Not orders of magnitude, but maybe 15% faster.

I'm pretty sure this IO (zeroing) is always 1MB writes, so I don't think this caused my write size to change. Maybe it did something to the iSCSI packets?

Jake

On Fri, Mar 6, 2015 at 9:04 AM, Nick Fisk <nick@xxxxxxxxxx> wrote:

From: ceph-users [mailto:ceph-users-bounces@xxxxxxxxxxxxxx] On Behalf Of Jake Young

Sent: 06 March 2015 12:52

To: Nick Fisk

Cc: ceph-users@xxxxxxxxxxxxxx

Subject: Re: tgt and krbd

On Thursday, March 5, 2015, Nick Fisk <nick@xxxxxxxxxx> wrote:

Hi All,

Just a heads up after a day’s experimentation.

I believe tgt with its default settings has a small write cache when exporting a kernel-mapped RBD. Doing some write tests, I saw 4 times the write throughput when using tgt aio + krbd compared to tgt with the built-in librbd backend.

After running the following command against the LUN, which apparently disables the write cache, performance dropped back to what I am seeing with tgt+librbd, which is also the same as what I get from fio.

tgtadm --op update --mode logicalunit --tid 2 --lun 3 -P mode_page=8:0:18:0x10:0:0xff:0xff:0:0:0xff:0xff:0xff:0xff:0x80:0x14:0:0:0:0:0:0
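As a rough aid to reading the mode_page string above, here is a minimal Python sketch that decodes the cache bits it advertises. It assumes the tgtadm value is laid out as page code, subpage code, page length, then the page bytes starting at byte 2 of the SCSI caching mode page (0x08), where per SBC bit 2 is WCE (write cache enable) and bit 0 is RCD (read cache disable). The helper names are hypothetical, not part of tgt.

```python
# Hypothetical helper: decode the SBC caching mode page (0x08) from the
# colon-separated value passed to `tgtadm ... -P mode_page=...`.
# Assumed layout: page code, subpage code, page length, then page bytes
# beginning at byte 2 of the mode page.


def parse_mode_page(spec: str) -> list[int]:
    """Turn '8:0:18:0x10:...' into ints; base 0 accepts decimal and 0x hex."""
    return [int(field, 0) for field in spec.split(":")]


def caching_flags(spec: str) -> dict[str, bool]:
    fields = parse_mode_page(spec)
    page_code, length, data = fields[0], fields[2], fields[3:]
    if page_code != 0x08:
        raise ValueError("not the caching mode page (0x08)")
    if len(data) != length:
        raise ValueError(f"expected {length} page bytes, got {len(data)}")
    byte2 = data[0]  # byte 2 of the caching mode page
    return {
        "WCE": bool(byte2 & 0x04),  # 1 = write cache advertised as enabled
        "RCD": bool(byte2 & 0x01),  # 1 = read cache disabled
    }


# The value from the command above: byte 2 = 0x10, so WCE is clear.
disabled = "8:0:18:0x10:0:0xff:0xff:0:0:0xff:0xff:0xff:0xff:0x80:0x14:0:0:0:0:0:0"
# Hypothetical variant with byte 2 = 0x14, which would set WCE.
enabled = disabled.replace("18:0x10:", "18:0x14:", 1)

print(caching_flags(disabled))
print(caching_flags(enabled))
```

This only shows what the page tells the initiator; it says nothing about whether any cache actually exists behind the LUN.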

From that, I can only deduce that using tgt + krbd in its default state is not 100% safe, especially in an HA environment.

Nick

Hey Nick,

tgt actually does not have any caches. No read, no write. tgt's design is to pass all commands through to the backend as efficiently as possible.

http://lists.wpkg.org/pipermail/stgt/2013-May/005788.html

The configuration parameters just inform the initiators whether the backend storage has a cache. Clearly this makes a big difference for you. What initiator are you using with this test?

Maybe the kernel is doing the caching. What tuning parameters do you have on the krbd disk?

It could be that using aio is much more efficient. Maybe the built-in librbd backend isn't doing aio?

Jake

Hi Jake,

Hmm, that’s interesting; it’s definitely affecting write behaviour, though.

I was running Iometer doing queue-depth-1 writes in a Windows VM on ESXi using its software initiator, which, as far as I’m aware, should be sending sync writes for each request.

I saw in iostat on the tgt server that my 128KB writes were being coalesced into ~1024KB writes, which would explain the performance increase. So something somewhere is doing caching, albeit on a small scale.
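Why coalescing would raise throughput can be made concrete with a simple queue-depth-1 model: with one outstanding request, throughput is request size divided by per-request latency, so if latency grows less than proportionally with size, larger requests move more data per second. The latencies below are hypothetical placeholders chosen for illustration, not measurements from this thread.

```python
# Illustrative model only: at queue depth 1 each write must complete before
# the next is issued, so throughput = request size / per-request latency.
# The latency figures are hypothetical, not measured values.


def qd1_throughput_mib_s(request_kib: float, latency_ms: float) -> float:
    """Throughput in MiB/s with a single outstanding request of request_kib."""
    return (request_kib / 1024) / (latency_ms / 1000)


small = qd1_throughput_mib_s(128, 4.0)    # 128 KiB writes, hypothetical 4 ms each
large = qd1_throughput_mib_s(1024, 10.0)  # 1024 KiB writes, hypothetical 10 ms each
print(f"{small:.2f} MiB/s vs {large:.2f} MiB/s")
```

Under these assumed latencies the 8x larger request takes only 2.5x longer, so throughput rises; if latency scaled linearly with size, coalescing would gain nothing.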

The krbd disk was using all default settings. I know the RBD support in tgt uses librbd sync writes, which I suppose might explain the difference in default behaviour, but that should be the expected behaviour.

Nick

_______________________________________________ ceph-users mailing list ceph-users@xxxxxxxxxxxxxx http://lists.ceph.com/listinfo.cgi/ceph-users-ceph.com